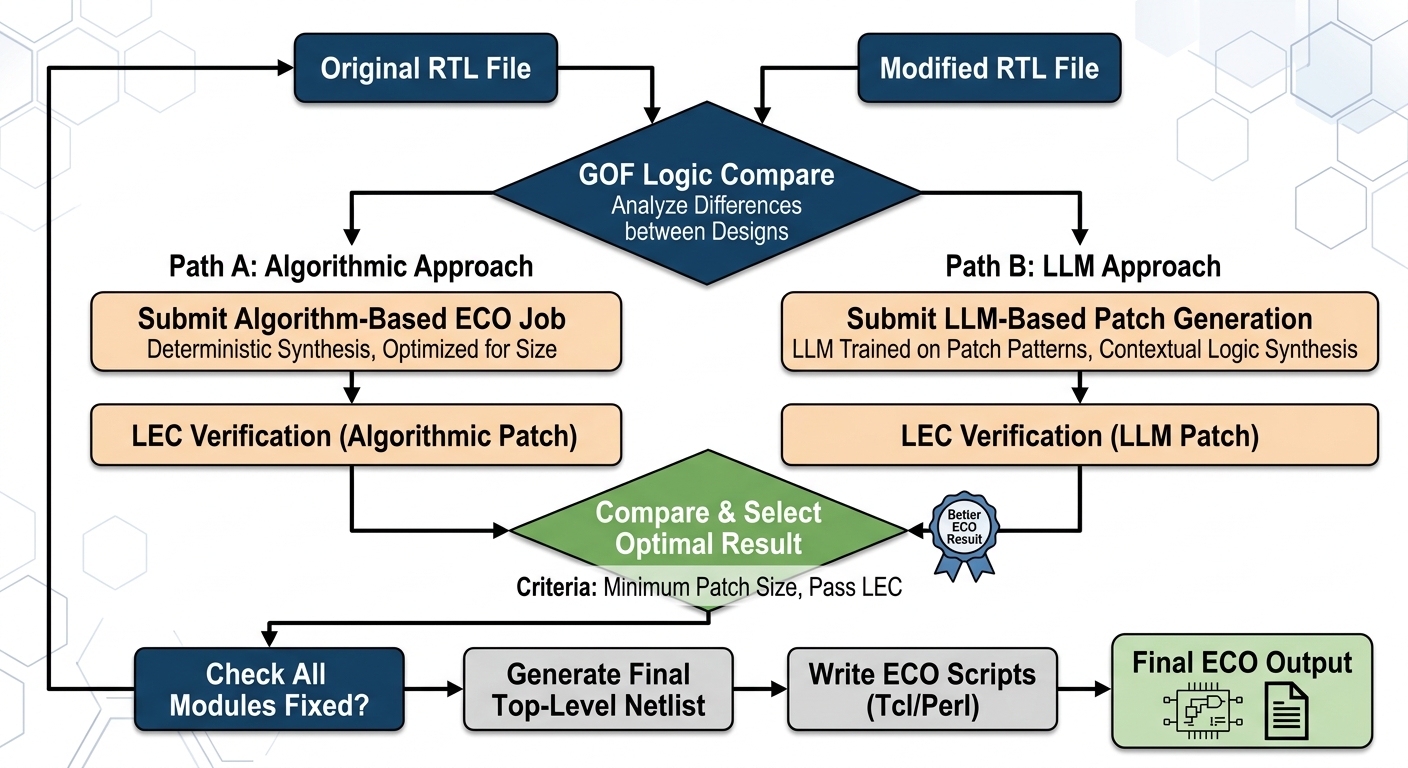

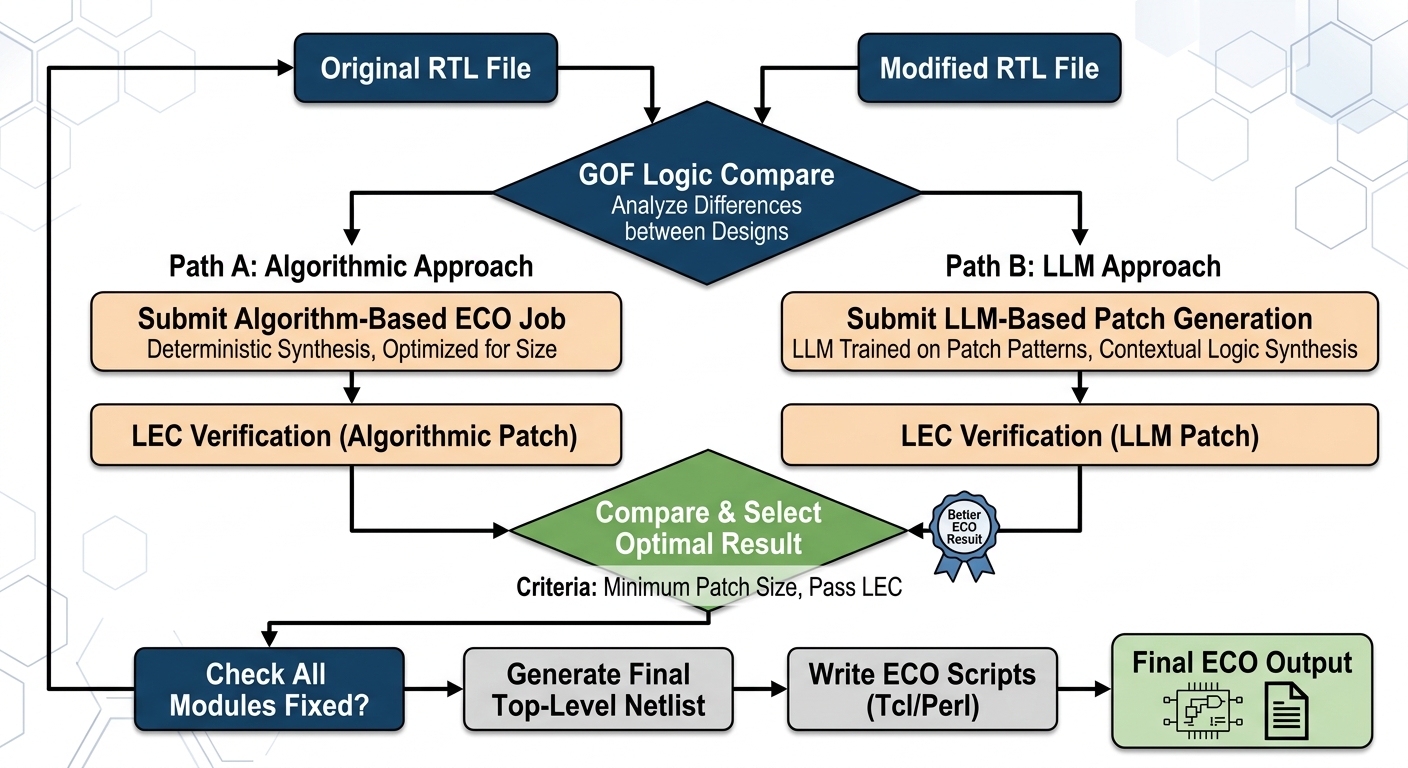

Figure 1: The original, linear ECO flow. Path B (LLM Approach) is a single, one-shot step whose primary goal is a passing LEC and minimal patch size.

In the high-stakes world of electronic design automation (EDA), achieving peak performance is a constant battle. Even minor tweaks during the Engineering Change Order (ECO) phase can have significant ramifications. A recent technique changes how Large Language Models (LLMs) are used in this phase: instead of a single attempt, the LLM-based ECO fix process runs multiple trials. The reported result is a 60% increase in performance.

Traditionally, ECO fixes are about precision and minimization. You want to fix a functional issue without introducing new problems or growing the circuit unnecessarily. The primary metrics have typically been "minimum patch size" and "passing Logic Equivalence Check (LEC)." While these metrics are critical, focusing on them alone can leave performance on the table. An ECO fix might work, but is it the *fastest* possible solution?


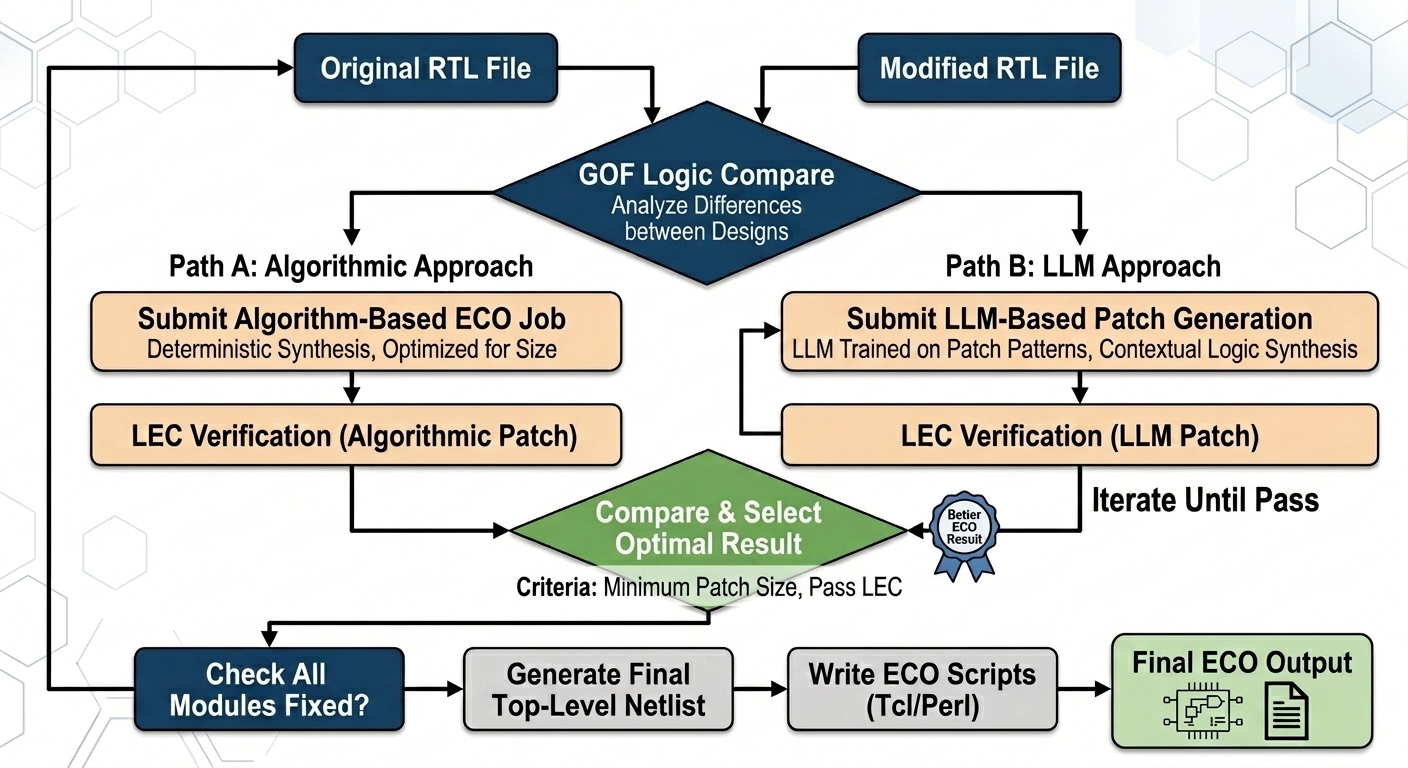

Figure 2: The enhanced, multi-trial ECO flow. The addition of the "Iterate Until Pass" loop in Path B (LLM Approach) allows the model to explore multiple, functionally correct solutions to find the performance winner.

The innovation lies in leveraging the generative capabilities of LLMs within a structured, multi-trial framework. Rather than asking the LLM for just *one* valid fix, the process is re-architected to request several.

Figure 1 (Original Flow) depicts a linear approach. For both Path A (algorithmic) and Path B (LLM), a single ECO is generated, verified, and then compared primarily on size. If the fix passes, that is usually where the optimization ends: the goal was correctness and size.
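The selection step of this linear flow can be sketched in a few lines. This is a minimal illustration, not the actual tool logic: the `Patch` type, the `source` labels, and using cell count as the size metric are all assumptions, and the LEC result is simply a stored boolean rather than a real equivalence check.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Patch:
    source: str       # hypothetical label: "algorithmic" or "llm"
    size: int         # assumed size metric, e.g. number of cells in the patch
    passes_lec: bool  # stand-in for a real Logic Equivalence Check result


def select_linear(candidates: List[Patch]) -> Optional[Patch]:
    """Original flow: discard patches that fail LEC, then pick the smallest."""
    valid = [p for p in candidates if p.passes_lec]
    if not valid:
        return None
    return min(valid, key=lambda p: p.size)


algo = Patch("algorithmic", size=12, passes_lec=True)
llm = Patch("llm", size=9, passes_lec=True)
print(select_linear([algo, llm]).source)  # llm
```

Note that performance never enters the decision: one candidate per path, and the smaller valid patch wins.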

Figure 2 (Multi-Trial Flow) shows the transformative change. Notice the new loop on Path B: "Iterate Until Pass." This isn't just a simple retry loop; it is designed to produce a *variety* of functionally correct patches. Every candidate is formally verified, so all surviving candidates are valid. The key is that they are *different* implementations.
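A loop that collects several distinct, verified patches might look like the sketch below. It rests on several assumptions: `propose` stands in for one LLM call, `run_lec` stands in for a formal equivalence checker, and the stopping rule (`want` valid patches or `max_trials` attempts) is a hypothetical choice, since the original does not say how many trials are run.

```python
from typing import Callable, List, Set


def collect_valid_patches(
    propose: Callable[[int], str],
    run_lec: Callable[[str], bool],
    want: int,
    max_trials: int,
) -> List[str]:
    """Repeatedly ask the generator for a patch, keeping distinct LEC-passing ones."""
    seen: Set[str] = set()
    valid: List[str] = []
    for trial in range(max_trials):
        patch = propose(trial)   # one (stubbed) LLM attempt
        if patch in seen:
            continue             # identical implementation: no need to re-verify
        seen.add(patch)
        if run_lec(patch):       # only functionally equivalent patches survive
            valid.append(patch)
            if len(valid) >= want:
                break
    return valid


# Hypothetical stand-ins: the "LLM" cycles through a few snippets,
# and the "LEC" rejects any snippet containing "BUG".
SNIPPETS = [
    "y = a & b",
    "y = ~(~a | ~b)",
    "y = a ^ BUG",
    "y = a & b",
    "y = nand(nand(a,b),nand(a,b))",
]


def propose(trial: int) -> str:
    return SNIPPETS[trial % len(SNIPPETS)]


def run_lec(patch: str) -> bool:
    return "BUG" not in patch


print(collect_valid_patches(propose, run_lec, want=3, max_trials=10))
```

Deduplicating before verification keeps the loop focused on *different* implementations and avoids spending LEC runs on repeats.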

The primary benefit comes from adding "Performance" as a co-equal criterion in the "Compare & Select Optimal Result" block. The comparison is no longer between the algorithmic result and a single LLM result. It is between the algorithmic result and *the best-performing result from the multiple LLM trials*.
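One way to express this comparison is sketched below. It assumes every candidate has already passed LEC and that performance is summarized by a single worst-slack number in nanoseconds (higher is better); both the metric and the policy of ranking on performance first and breaking ties on smaller size are illustrative choices, not necessarily what the original flow uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    source: str      # hypothetical label: "algorithmic" or "llm-trial-N"
    size: int        # assumed size metric, e.g. cells added by the patch
    slack_ns: float  # assumed performance metric: worst slack, higher is better


def select_optimal(algorithmic: Candidate, llm_trials: List[Candidate]) -> Candidate:
    """Pick the best-performing LEC-clean candidate, preferring smaller patches on ties."""
    pool = [algorithmic, *llm_trials]
    return max(pool, key=lambda c: (c.slack_ns, -c.size))


algo = Candidate("algorithmic", size=10, slack_ns=-0.05)
trials = [
    Candidate("llm-trial-1", size=8, slack_ns=-0.08),
    Candidate("llm-trial-2", size=11, slack_ns=0.02),
    Candidate("llm-trial-3", size=9, slack_ns=0.02),
]
print(select_optimal(algo, trials).source)  # llm-trial-3
```

A size-only comparison would have picked `llm-trial-1`, the smallest patch but the slowest one. A weighted score or a Pareto filter over (performance, size) would be equally reasonable ways to treat the two criteria as co-equal.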

The 60% performance boost represents a major competitive advantage. By moving from a linear "find one fix" methodology to a generative "explore many fixes and pick the best" approach, we can unlock performance potential that was previously hidden. This shows that the generative nature of LLMs, combined with a loop of multiple verifiable trials, is a powerful new tool in the EDA engineer's arsenal for building faster, more efficient chips.